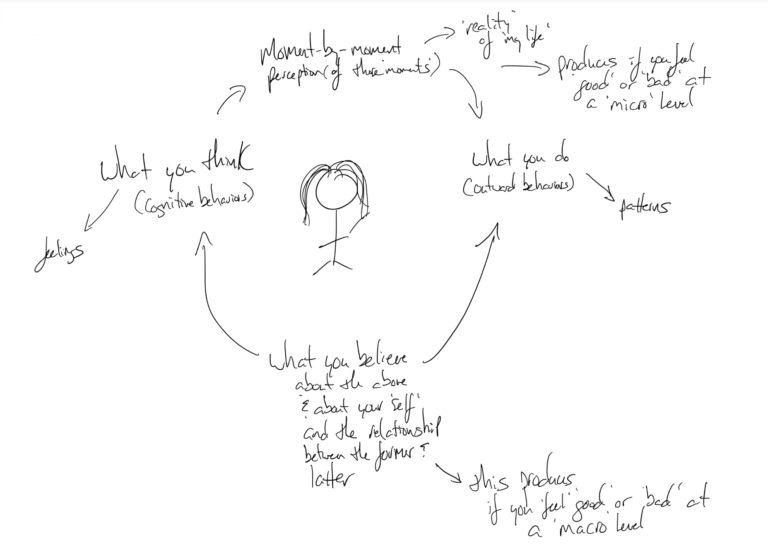

A cognitive bias is an inherent thinking ‘blind spot’ that reduces thinking accuracy and results in inaccurate, and often irrational, conclusions.

Much like logical fallacies, cognitive biases can be viewed either as causes or effects but can generally be reduced to broken thinking. Not all ‘broken thinking,’ blind spots, and failures of thought are labeled, of course. But some are so common that they are given names–and once named, they’re easier to identify, emphasize, analyze, and ultimately avoid.

And that’s where this list comes in.

Cognitive Bias –> Confirmation Bias

For example, consider confirmation bias.

In What Is Confirmation Bias? we looked at this very common thinking mistake: the tendency to overvalue data and observations that fit with our existing beliefs.

The pattern is to form a theory (often based on emotion) supported with insufficient data, and then to restrict critical thinking and ongoing analysis, which is, of course, irrational. Instead, you look for data that fits your theory.

While it seems obvious enough to avoid, confirmation bias is a particularly sinister cognitive bias, affecting not just intellectual debates, but relationships, personal finances, and even your physical and mental health. Racism and sexism, for example, can both be deepened by confirmation bias. If you have an opinion on gender roles, it can be tempting to look for ‘data’ from your daily life that reinforces your opinion on those roles.

This is, of course, all much more complex than the above thumbnail. The larger point, however, is that a failure of rational and critical thinking is not merely ‘wrong’ but erosive and even toxic, and not only in academia but at every level of society.

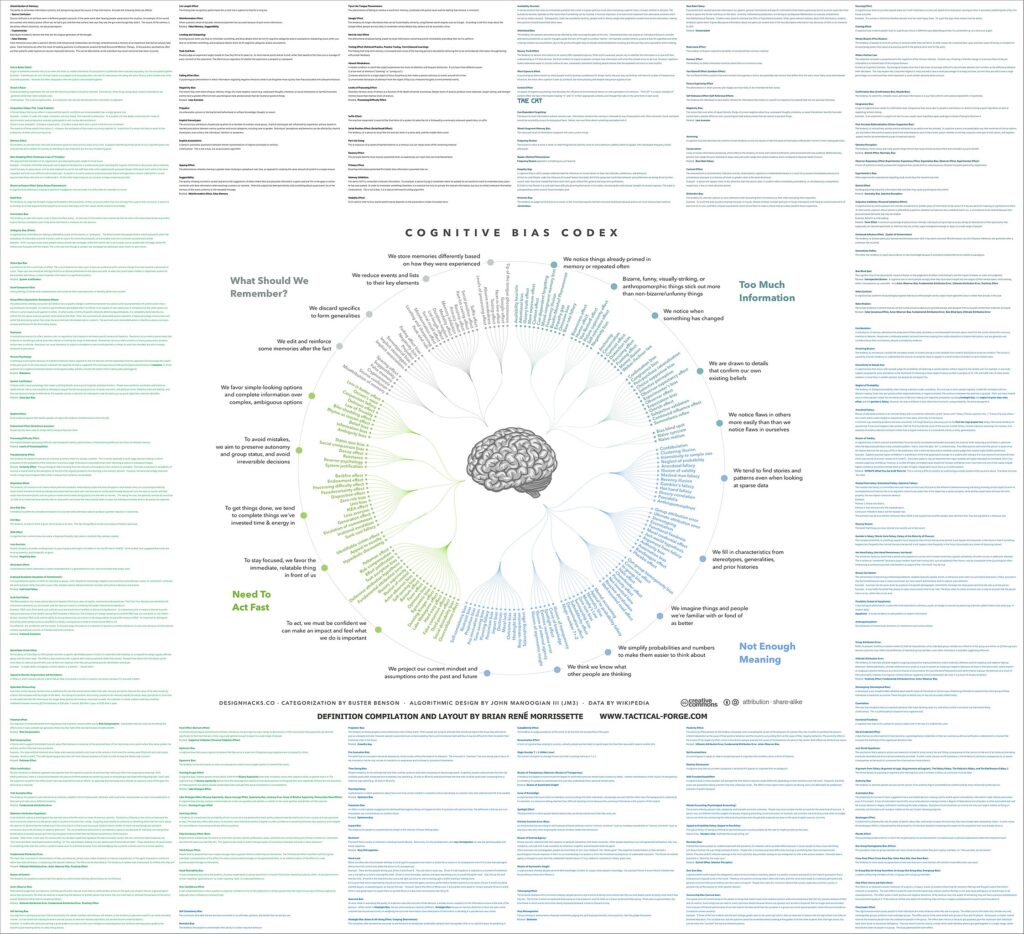

The Cognitive Bias Codex: A Visual Of 180+ Cognitive Biases

And that’s why a graphic like this is so extraordinary. In a single image, we have delineated dozens and dozens of these ‘bad cognitive patterns’ that, as a visual, underscore how commonly our thinking fails us and, as a result, where we might begin to improve. Why and how to accomplish this in a modern context is at the core of TeachThought’s mission.

The graphic is structured as a circle divided into four quadrants, one for each category of cognitive bias:

1. Too Much Information

2. Not Enough Meaning

3. Need To Act Fast

4. What Should We Remember?

We’ve listed each bias below, moving clockwise from ‘Too Much Information’ to ‘What Should We Remember?’ Obviously, this list isn’t exhaustive, and there are even subjectivities and cultural biases embedded within (down to some of the biases themselves, such as the ‘IKEA effect’). The premise, though, remains intact: What are our most common failures of rational and critical thinking, and how can we avoid them in pursuit of academic and sociocultural progress?

So take a look and let me know what you think. There’s even an updated version of this graphic with definitions for each of the biases, which I personally love but which is difficult to read. You can find it at the bottom of this post.

Too Much Information

We notice things already primed in memory or repeated often

Availability heuristic

Attentional bias

Illusory truth effect

Mere exposure effect

Context effect

Cue-dependent forgetting

Mood-congruent memory bias

Frequency illusion

Baader-Meinhof Phenomenon

Empathy gap

Omission bias

Base rate fallacy

Bizarre, funny, visually-striking, or anthropomorphic things stick out more than non-bizarre/unfunny things

Bizarreness effect

Humor effect

Von Restorff effect

Picture superiority effect

Self-relevance effect

Negativity bias

We notice when something has changed

Anchoring

Conservatism

Contrast effect

Distinction bias

Focusing effect

Framing effect

Money illusion

Weber-Fechner law

We are drawn to details that confirm our own existing beliefs

Confirmation bias

Congruence bias

Post-purchase rationalization

Choice-supportive bias

Selective perception

Observer-expectancy effect

Experimenter’s bias

Observer effect

Expectation bias

Ostrich effect

Subjective validation

Continued influence effect

Semmelweis reflex

We notice flaws in others more easily than we notice flaws in ourselves

Bias blind spot

Naive cynicism

Naive realism

Not Enough Meaning

We tend to find stories and patterns even when looking at sparse data

Confabulation

Clustering illusion

Insensitivity to sample size

Neglect of probability

Anecdotal fallacy

Illusion of validity

Masked man fallacy

Recency illusion

Gambler’s fallacy

Illusory correlation

Pareidolia

Anthropomorphism

We fill in characteristics from stereotypes, generalities, and prior histories

Group attribution error

Ultimate attribution error

Stereotyping

Essentialism

Functional fixedness

Moral credential effect

Just-world hypothesis

Argument from fallacy

Authority bias

Automation bias

Bandwagon effect

Placebo effect

We imagine things and people we’re familiar with or fond of as better

Out-group homogeneity bias

Cross-race effect

In-group bias

Halo effect

Cheerleader effect

Positivity effect

Not invented here

Reactive devaluation

Well-traveled road effect

We simplify probabilities and numbers to make them easier to think about

Mental accounting

Appeal to probability fallacy

Normalcy bias

Murphy’s Law

Zero-sum bias

Survivorship bias

Subadditivity effect

Denomination effect

Magic number 7±2

We think we know what other people are thinking

Illusion of transparency

Curse of knowledge

Spotlight effect

Extrinsic incentive error

Illusion of external agency

Illusion of asymmetric insight

Self-consistency bias

We project our current mindset and assumptions onto the past and future

Restraint bias

Projection bias

Pro-innovation bias

Time-saving bias

Planning fallacy

Pessimism bias

Impact bias

Declinism

Moral luck

Outcome bias

Hindsight bias

Rosy retrospection

Telescoping effect

Need To Act Fast

We favor simple-looking options and complete information over complex, ambiguous options

Less-is-better effect

Occam’s razor

Conjunction fallacy

Delmore effect

Law of Triviality

Bike-shedding effect

Rhyme as reason effect

Belief bias

Information bias

Ambiguity bias

To avoid mistakes, we aim to preserve autonomy and group status and avoid irreversible decisions

Status quo bias

Social comparison bias

Decoy effect

Reactance

Reverse psychology

System justification

To get things done, we tend to complete things we’ve invested time & energy in

Backfire effect

Endowment effect

Processing difficulty effect

Pseudocertainty effect

Disposition effect

Zero-risk bias

Unit bias

IKEA effect

Loss aversion

Generation effect

Escalation of commitment

Irrational escalation

Sunk cost fallacy

To stay focused, we favor the immediate, relatable thing in front of us

Identifiable victim effect

Appeal to novelty

Hyperbolic discounting

To act, we must be confident we can make an impact and feel what we do is important

Peltzman effect

Risk compensation

Effort justification

Trait ascription bias

Defensive attribution hypothesis

Fundamental attribution error

Illusory superiority

Illusion of control

Actor-observer bias

Self-serving bias

Barnum effect

Forer effect

Optimism bias

Egocentric bias

Dunning-Kruger effect

Lake Wobegon effect

Hard-easy effect

False consensus effect

Third-person effect

Social desirability bias

Overconfidence effect

What Should We Remember?

We store memories differently based on how they are experienced

Tip of the tongue phenomenon

Google effect

Next-in-line effect

Testing effect

Absent-mindedness

Levels of processing effect

We reduce events and lists to their key elements

Suffix effect

Serial position effect

Part-list cueing effect

Recency effect

Primacy effect

Memory inhibition

Modality effect

Duration neglect

List-length effect

Serial recall effect

Misinformation effect

Leveling and sharpening

Peak-end rule

We discard specifics to form generalities

Fading affect bias

Negativity bias

Prejudice

Stereotypical bias

Implicit stereotypes

Implicit association

We edit and reinforce some memories after the fact

Spacing effect

Suggestibility

False memory

Cryptomnesia

Source confusion

Misattribution of memory